In the move to the Microsoft 365 cloud, there always have been a gap in order to get external data into your search index. In on-premises you were able to use Business Connectivity Services (BCS) in order to get external data into your SharePoint Search index. Microsoft announced the general availability of Microsoft Graph Connectors. With Microsoft Graph Connectors we can connect external data and let Microsoft Search index this data, so it will be searchable. Microsoft delivers connectors by default to some cloud or on-premises data like Azure SQL, File shares, ServiceNow, etc. There are also a 100+ 3rd party connectors available to other data sources and you can also develop your own connectors using the Microsoft Search APIs to ingest the data into the index.

In this blog I will use a default Microsoft connector to Azure SQL and discuss some of my findings using this connector. More info on the general setup of an Azure SQL connector can be found here.

Azure SQL connector setup

The Microsoft Docs documentation for setting up a connector to an Azure SQL database is pretty good. It describes the steps to take with some great tips. There are some optional and required steps. For instance you could setup Access Control Lists (ACLs) in order to security trim your data to users. This is optional and requires some setup in your SQL table design. One of the required steps is to implement the authentication to your Azure SQL database, which the indexer will use during the crawls over your data.

Authentication setup

The recommended way is to register an App in Azure AD, create a client secret and use this information during the setup of the authentication in the connector. Next to this you will need to give this principle at least read rights to your database. You do this by running a SQL script like this:

CREATE USER [app name] FROM EXTERNAL PROVIDER

exec sp_addrolemember 'db_datareader', [app name]' Or 'ALTER ROLE db_datareader ADD MEMBER [app name]NOTE: [app name] should be equal to the name of the registered App in Azure AD

Another option is to use an Azure SQL user and connect to the Database directly. This is great for quick and easy setup for testing purposes. Since the GA of the connectors I was not able anymore to use a SQL login during the Azure SQL connector setup.

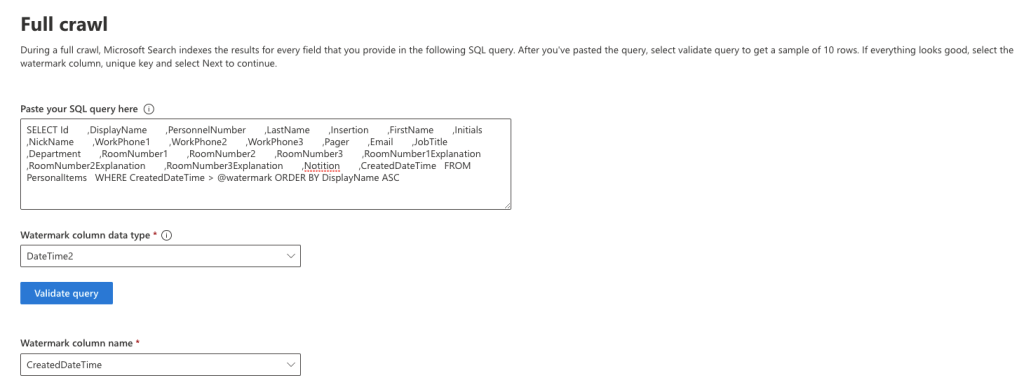

Full crawl and incremental crawl setup

In order to be able to setup full and incremental crawls within your connector, it is necessary to have a so called watermark column available in your data. See this as a created and modified column. With these columns both crawls will only crawl data which is added or modified after the last crawl. This will prevent overloading the database and is a mechanism to control data refresh for full crawls. Within the connector, for both the full as the incremental crawl setup you can specify which column in your Azure SQL table will be your Watermark column.

The Watermark column can be of a DateTime or Integer kind of datatype. In my example I used DateTime2 datatype. In order to create a CreatedDateTime and ModifiedDateTime column and automatically fill these columns with data during data creation, I created a default for the Created column and a SQL Trigger for the Modified column:

Columns in my Create Table script:

[CreatedDateTime] DATETIME2(3) CONSTRAINT DF_PersonalItems_Created DEFAULT (SYSDATETIME()),

[ModifiedDateTime] DATETIME2(3) CONSTRAINT DF_PersonalItems_Modified DEFAULT (SYSDATETIME())

Trigger:

CREATE TRIGGER updateModified

ON dbo.PersonalItems

AFTER UPDATE

AS

UPDATE dbo.PersonalItems

SET ModifiedDateTime = SYSDATETIME()

FROM Inserted i

WHERE dbo.PersonalItems.Id = i.IdThis way I can use these columns as Watermark during setup. In the Select statement for the Full Crawl I used below Where clause for the Watermark:

WHERE CreatedDateTime > @watermarkAnd for the Incremental Crawl:

WHERE ModifiedDateTime > @watermarkAssign property labels

There is not much documentation around the ‘Assign property labels’, but according Microsoft it can improve the search relevance and ensure more accurate results. I asked if I can assign properties to certain labels, even if they are not really appropriate. This is not really the case. You should only assign properties to appropriate labels. So you could assign a property called DisplayName for instance to the Title label. In my case this was the only appropriate property to assign. When you actually use the connector more and more in search, the results should become more accurate. I was able to see some progress here.

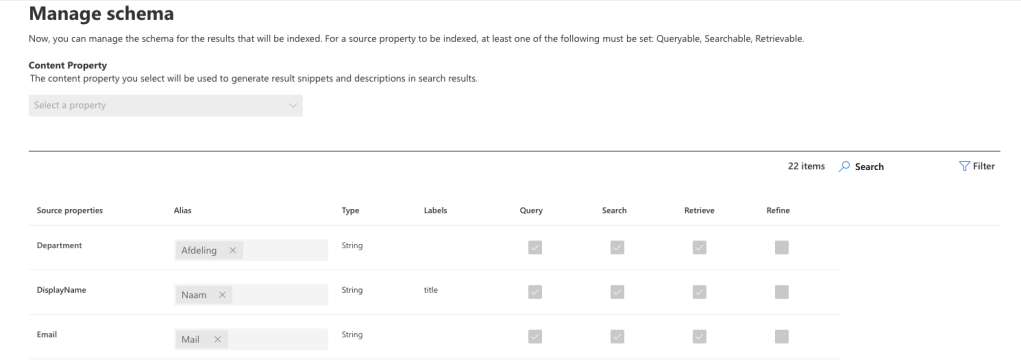

Manage schema

For each column you use in your Select statement in the Full crawl setup, a property is created in the Search schema. For each property you can assign an Alias and you can tell if the property is queryable, searchable, retrievable or refinable. Note that the External items at this point in time are not refinable yet and only selectable for Datetime properties.

The other steps in the connector setup are optional and I won’t go through them here. You could find more information about them in the documentation.

What’s next?

When you have setup the connector, the next step could be to setup a result type and vertical for it, so you could display the search results in SharePoint Online. I went another way and wanted to use the external data in my own application and use Microsoft Search API to search my data. Before I go into more details about how to use the connector through the Microsoft Search API, I would like to point out the licence requirements needed in order to be able to use the Microsoft Graph Connectors.

Licences

Microsoft created a short overview about the licence requirements and pricing. When you use a connector to index your data, each record in the index needs some storage and needs to be processed by the crawlers. This requires a licence. Any Microsoft 365 or Office 365 license allows you to view the data. The storage of the index needs an index quota. Graph connectors index quota is available via:

- Entitlement built into Microsoft 365 or Office 365 E5 licenses

- Purchase of add-on index quota (through billing page -> purchase services, in the Microsoft 365 Admin Center)

Each Microsoft 365 of Office 365 E5 licence includes 500 index items (quota). So when you have 1000 E5 licences, you are able to index 500.000 items (records). When you don’t have any E5 licences, you should buy an add-on index quota licence, which will enable your to index up to 1 million items. The maximum number of items you are able to index is 7 million at this moment. It is expected these numbers will be stretched later on.

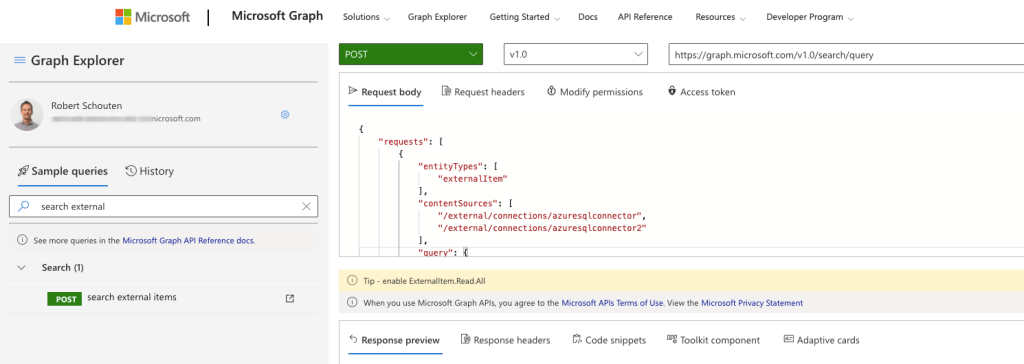

Microsoft Search API

A good starting point to start playing with your indexed data through the Microsoft Search API, is by the use of the Microsoft Graph Explorer. Search for the sample query ‘search external’ on the left hand side and you can start off using the the Microsoft Search API.

You can make a Post http request to the endpoint ‘https://graph.microsoft.com/v1.0/search/query’. The request body you post to this endpoint, should contain the following object data with searchRequests:

{

"requests": [

{

"entityTypes": [

"externalItem"

],

"contentSources": [

"/external/connections/azuresqlconnector",

"/external/connections/azuresqlconnector2"

],

"query": {

"queryString": "*"

},

"from": 0,

"size": 100,

"fields": [

"FirstName"

]

}

]

}It contains a searchRequest array object, with information about your request. This implies you are able to send several requests in once to the Microsoft Search API endpoint. One of the limitations at the moment is that you can only supply 1 searchRequest at a time. Another limitation for externalItem entityTypes, is you can not Sort your data. So you always get back your data with relevance sorting.

A nice option though is that you can specify more then one contentSource (connectors). Limitation here is they all should be externalItems. So you cannot combine externalItem and SharePoint Items for instance.

Conclusion

With the introduction of the new Microsoft Graph Search Connectors, Microsoft created new ways to ingest data, which lives outside your Microsoft 365 tenant, into the Microsoft Search index. There are some out of the box connectors from Microsoft, which you can use right away. This makes it possible to ingest data from (Azure) SQL, File shares, Web sites and more. Next to this there are 100+ 3rd party connectors to your convenience or you could develop and write your own custom connectors. In the past we had opportunities using Business Connectivity Services (BSC) to ingest external data to search. The Microsoft Graph Connectors are the modern way in Microsoft 365. It opens up a lot of new opportunities and scenarios what you can do with this, most of the time siloed, data. When the data is ingested into the Microsoft Search index, you are able to create new Search verticals and Result types, so you are able to use the out of the box search features in Office 365, Bing and Office.com. Next to this you can use the Microsoft Search API to use the data into your custom applications.

What do you think about it? Leave a comment!

February 5, 2022 at 3:33 pm

Good article and overview, thanks for sharing. One update, Viva Topics licenses also include the 500 item quote grant. Feel free to reach out if you have questions or topics related to Graph connectors.

LikeLike

February 5, 2022 at 6:57 pm

Thank you Brian and glad to be of help. Good addition about the Viva licences. I will update the article.

LikeLike

February 5, 2022 at 6:59 pm

One question though. At a client I use the soft delete option in the Azure SQL connector. Since last week, the items won’t be removed from the index when it meets the soft delete condition. Any thoughts?

LikeLike